Originally published October 2014. Lightly edited for style, but not for content or hindsight. I resurrected it as it relates to my more recent security explorations. Apple’s design may have changed significantly since then.

Any iOS developer could create an app that read the secrets stored by Dropbox, PayPal, or Google Authenticator. The malicious app would pass App Store validation. While these secrets had a complex security model, it was defeated by a missing server-side check in Apple’s provisioning portal.

I reported this to Apple in September 2013. I noticed the fix on October 10, 2014, 13 months later.

What is Keychain, and what are access groups?

Keychain is the system on iOS (and macOS) for secure storage of sensitive data like passwords. By default, Keychain items are bound to the app that created them: other apps cannot see them.

Sometimes a developer wants multiple apps to share Keychain entries. They can do this through a Keychain access group: every app in the group can read all items stored for that group.

How does Keychain know who can join a group?

Only apps from the same developer are supposed to share a group. This is enforced through “App ID prefixes” and provisioning profiles.

Every iOS app has an App ID, chosen by the developer. This is prefixed by a short random string, the App ID prefix, which cannot be freely chosen. Multiple apps by the same developer can share a prefix, but apps from different developers cannot. App IDs and prefixes are not secret.

A Keychain access group name must start with an App ID prefix, and that prefix must match the one in the app’s provisioning profile. If they don’t match, access is denied. If they do, the app can read and write items in the group.
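
The matching rule can be modeled in a few lines of Python. This is only a sketch of the rule as described here, not Apple’s implementation: the function names are invented for illustration, and `ABCDE12345` is a made-up prefix standing in for another developer.

```python
from typing import Tuple


def split_access_group(group: str) -> Tuple[str, str]:
    """Split '<prefix>.<name>' into (prefix, name)."""
    prefix, sep, name = group.partition(".")
    if not (prefix and sep and name):
        raise ValueError(f"malformed access group: {group!r}")
    return prefix, name


def may_use_access_group(profile_prefix: str, group: str) -> bool:
    """Model of the rule: the group's prefix must equal the profile's prefix."""
    group_prefix, _name = split_access_group(group)
    return group_prefix == profile_prefix


# Group name taken from the Dropbox example later in this article.
DROPBOX_GROUP = "8KM394JM3R.com.getdropbox.DropboxKeychainFamily"

# "ABCDE12345" is a hypothetical prefix, not a real developer's.
print(may_use_access_group("8KM394JM3R", DROPBOX_GROUP))  # True
print(may_use_access_group("ABCDE12345", DROPBOX_GROUP))  # False
```

In this model the check is only as strong as the process that hands out `profile_prefix`. Whoever can obtain a profile carrying someone else’s prefix passes it.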

I can make a Keychain access group for my own apps, but you can’t join it, because you cannot select my App ID prefix. Apple will not issue you a provisioning profile with my prefix.

The vulnerability

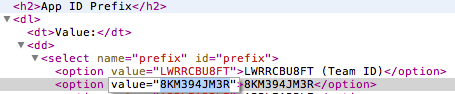

When registering an App ID, the developer selects the prefix from a dropdown. This lets them pick which of their existing prefixes to use, so their apps can share a Keychain access group.

The dropdown was implemented as a plain HTML select field. The portal assumed the browser would constrain the choices and did not validate the submitted value on the server side. With a web inspector or a line of JavaScript, I could select any prefix, including those belonging to other developers.
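
For illustration, here is roughly what the missing server-side check amounts to. I do not know how Apple’s portal is built, so every name below (`register_app_id`, `PrefixNotOwnedError`, the `ABCDE12345` prefix, the `com.example.dumper` bundle ID) is hypothetical. The point is only that the server compares the submitted value against what it knows the account owns.

```python
from typing import Set


class PrefixNotOwnedError(Exception):
    """Raised when a submitted prefix does not belong to the account."""


def register_app_id(owned_prefixes: Set[str], submitted_prefix: str,
                    bundle_id: str) -> str:
    """Register an App ID, trusting nothing the client sent.

    owned_prefixes is what the server knows about the account;
    submitted_prefix is whatever arrived in the form post, which may
    differ from the options the dropdown offered.
    """
    if submitted_prefix not in owned_prefixes:
        raise PrefixNotOwnedError(submitted_prefix)
    return f"{submitted_prefix}.{bundle_id}"


# Hypothetical account that owns a single made-up prefix.
my_prefixes = {"ABCDE12345"}

print(register_app_id(my_prefixes, "ABCDE12345", "com.example.dumper"))

try:
    # A tampered form post carrying a prefix from another account.
    register_app_id(my_prefixes, "8KM394JM3R", "com.example.dumper")
except PrefixNotOwnedError as err:
    print("rejected prefix:", err)
```

The dropdown can stay as a convenience. It just cannot be the only place where the list of allowed values is enforced.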

Proof of concept with Dropbox

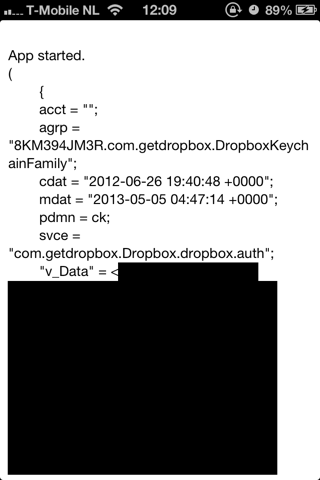

I built a small app that dumps all Keychain entries it can find. The target was the Dropbox app’s Keychain access group. (This is not a vulnerability in Dropbox: I am using it as an example.)

The Dropbox app’s entitlements show its Keychain access group:

    $ codesign -d --entitlements - Payload/Dropbox.app
    ....
    <key>keychain-access-groups</key>
    <array>
        <string>8KM394JM3R.com.getdropbox.DropboxKeychainFamily</string>
    </array>
    ....
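
The prefix can be pulled out of such entitlements programmatically. The sketch below uses Python’s standard `plistlib`. Because the output above is abbreviated, the sample wraps only the fragment shown in a minimal plist envelope. That envelope and the helper names are my assumptions, and depending on the `codesign` version the raw output may need cleanup before it parses.

```python
import plistlib

# Minimal plist wrapper around the fragment shown above. The rest of the
# real entitlements file is elided in the article and not reproduced here.
ENTITLEMENTS_XML = b"""<?xml version="1.0" encoding="UTF-8"?>
<plist version="1.0">
<dict>
    <key>keychain-access-groups</key>
    <array>
        <string>8KM394JM3R.com.getdropbox.DropboxKeychainFamily</string>
    </array>
</dict>
</plist>
"""


def keychain_access_groups(entitlements_xml: bytes) -> list:
    """Return the keychain-access-groups array, or [] if the key is absent."""
    entitlements = plistlib.loads(entitlements_xml)
    return entitlements.get("keychain-access-groups", [])


def app_id_prefix(group: str) -> str:
    """Return everything before the first dot of an access group name."""
    return group.split(".", 1)[0]


for group in keychain_access_groups(ENTITLEMENTS_XML):
    print(app_id_prefix(group), group)
```

Nothing here requires special access. The entitlements ship inside every app bundle, which is why App IDs and prefixes cannot be treated as secrets.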

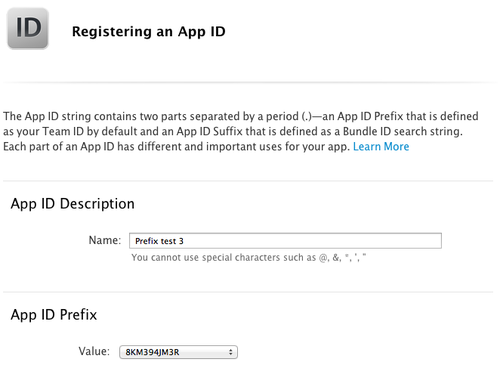

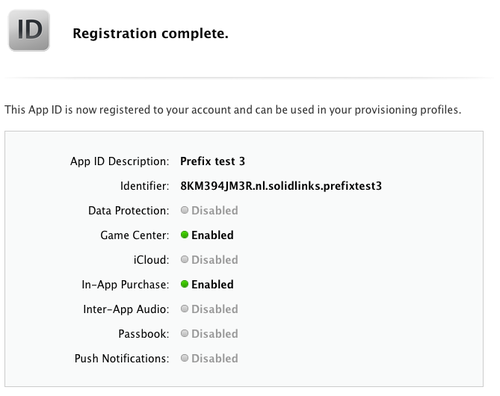

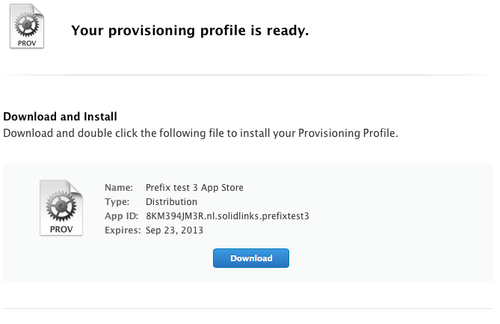

I registered an App ID with the same prefix:

The portal accepted it. This is the core of the vulnerability: it should have rejected a prefix that does not belong to my account.

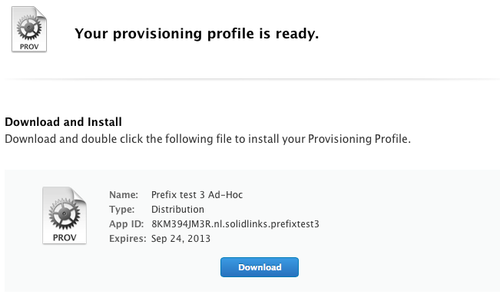

I created provisioning profiles with Dropbox’s prefix:

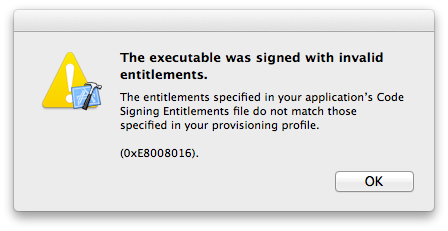

I set the same Keychain access group as Dropbox in my app’s entitlements:

Using my normal provisioning profile, Xcode correctly rejected the mismatch:

Using the provisioning profile Apple should not have given me, I ran the app on my device, which had Dropbox installed. It read the Dropbox Keychain entry, including the authentication key under v_Data:

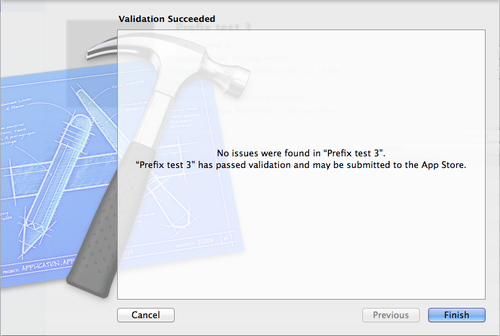

With the App Store provisioning profile, it also passed App Store validation:

What else was affected?

iOS 8 introduced App Groups for sharing data between an app and its widgets, using a mechanism similar to Keychain access groups. This likely increased the number of developers using shared groups, widening the impact.

Lessons we can learn

The entire Keychain access group security model was enforced by an HTML dropdown. The cryptographic infrastructure seemed sound, properly protected by provisioning profiles. But it hinged on limiting the options in a single dropdown, which any user can easily manipulate.

Disclosure timeline

I cannot pinpoint the exact date this issue was fixed, but I noticed the fix on October 10, 2014, which was 13 months after my initial report.

Apple has never provided any credit for my work.

| Date | Event |
|---|---|
| 2013-09-01 | I report the vulnerability to Apple, PGP encrypted. |
| 2013-09-04 | Apple requests a proof of concept. |
| 2013-09-07 | I reply with a full walkthrough and further clarification. |
| 2013-10-07 | I ask for a status update. |
| 2013-10-09 | Apple replies they were unable to decrypt my last message. |
| 2013-10-13 | I resend the status update request. |
| 2013-10-21 | Apple replies they were unable to decrypt my last message (again). |
| 2013-10-21 | I resend the request. |
| 2013-10-22 | Apple replies they were also unable to decrypt the original report. |
| 2013-10-30 | I resend the full original report and clarification in cleartext. |
| 2013-11-05 | Apple confirms: the issue is being investigated. |
| 2013-12-14 | I ask for an update and reproduction status. |
| 2013-12-16 | Apple asks whether I would like credit, and in which format. |
| 2013-12-17 | I reply with credit details. I ask whether this means the issue is resolved. |
| 2014-01-13 | I ask for an update and possible timeline. |
| 2014-01-18 | Apple: “We are testing a comprehensive fix.” |
| 2014-03-14 | I ask for an update. I note a publication has been prepared. |
| 2014-03-22 | Apple: “We have no new status to report at this time.” |
| 2014-09-18 | I ask for an update. The issue has been open almost a year. |
| 2014-09-30 | Apple: “We have no new status to report at this time.” |
| 2014-10-10 | I discover the vulnerability has been fixed. |